Neutrinos

by Joachim Pietzsch

“A new inhabitant of the heart of the atom was introduced to the world of physics today,” the New York Times reported from the AAAS meeting in Pasadena in June 1931, “when Dr. W. Pauli Jr. of the Institute of Technology of Zurich, Switzerland, postulated the existence of particles or entities which he christened ‘Neutrons’.”[1] At a time when only protons, photons and electrons were known, this was the first public appearance of the particle that, after James Chadwick’s discovery of the real neutron in 1932 (Nobel Prize in Physics 1935), would become the neutrino. Wolfgang Pauli had already shared his suggestion with a smaller circle of colleagues half a year earlier, in a letter from Zürich dated 4 December 1930. “Dear Radioactive Ladies and Gentlemen,” he wrote to the physicists gathered at a meeting in Tübingen, “I have hit upon a desperate remedy to save the ... law of conservation of energy...namely, the possibility that in the nuclei there could exist electrically neutral particles, which I will call neutrons...The continuous beta spectrum would then make sense with the assumption that in beta decay, in addition to the electron, a neutron is emitted such that the sum of the energies of neutron and electron is constant.”[2]

A Daring Defense of the Law of Energy Conservation

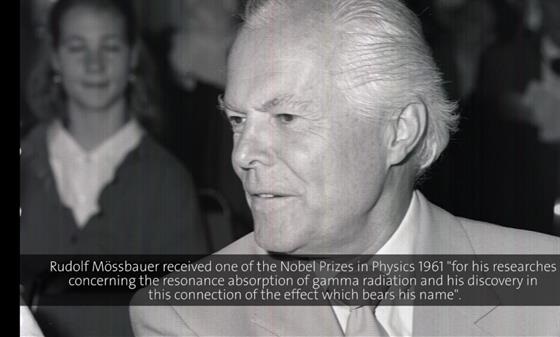

The problem Pauli “desperately” strove to solve with his proposal had been puzzling physicists for almost two decades. It concerned a major difference between the three types of radioactive emission, first demonstrated by Chadwick in 1914. Alpha particles (equivalent to helium nuclei) and gamma rays (highly energetic electromagnetic waves) were emitted with discrete energies, as expected. The electrons emitted in beta decay, however, showed a continuous energy spectrum. In other words, the emitted electrons did not carry the same energy every time, even though each decay of a given nucleus releases the same total energy. This seemed to contradict the fundamental law of energy conservation. Was this law perhaps not valid in the special case of beta decay? Even Niels Bohr seriously considered such a partial breakdown of energy conservation. This angered the very outspoken Wolfgang Pauli, who struggled hard for a solution, as Rudolf Mößbauer (Nobel Prize in Physics 1961) vividly recalled in his Lindau lecture in 1982:

(00:00:42 - 00:04:27)

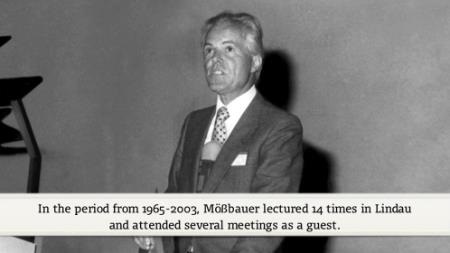

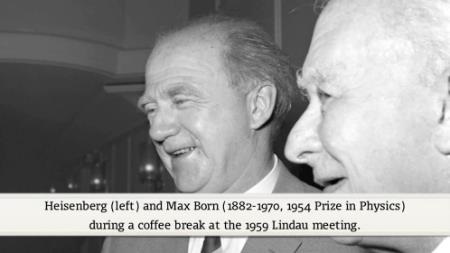

No Nobel Laureate has lectured more often on neutrino physics in Lindau than Rudolf Mößbauer, although this was not his original field. While doing research for his doctoral thesis, Mößbauer had discovered and explained the unexpected effect of recoilless nuclear resonance fluorescence. The effect bears his name and allows the spectroscopic measurement of extremely small frequency shifts in solids. It earned him the Nobel Prize in Physics 1961 when he was just 32 years old. “I fooled around for 15 years in the Mößbauer effect and then I’ve had it and left the field for neutrino research”, he told his audience in the last of the eight neutrino lectures he gave in Lindau between 1979 and 2001.

Like Mößbauer, Wolfgang Pauli did not receive the Nobel Prize for his contribution to neutrino research. He received it for the discovery of an important law of nature, the exclusion principle, which he made at the age of 25. The principle bears his name and states that no two identical fermions can occupy the same quantum state at the same time. In other words, “there cannot be more than one electron in each energy state when this state is completely defined”.[3] When he was awarded the Nobel Prize in Physics 1945 for this achievement, neutrinos still had not been detected, as if to fulfil his own prophecy: “I have done a terrible thing. I have postulated a particle that cannot be detected.”[4] Wolfgang Pauli is widely regarded as one of the most eminent and influential physicists of the 20th century. He attended the Lindau Meetings only once, in 1956, without lecturing, and died prematurely in 1958. His close friend Werner Heisenberg, who had almost all of his papers proofread by Pauli before publication, paid tribute to him in 1959:

(00:00:15 - 00:03:42)

Nature Rejects Fermi’s “Theoretical Masterpiece”

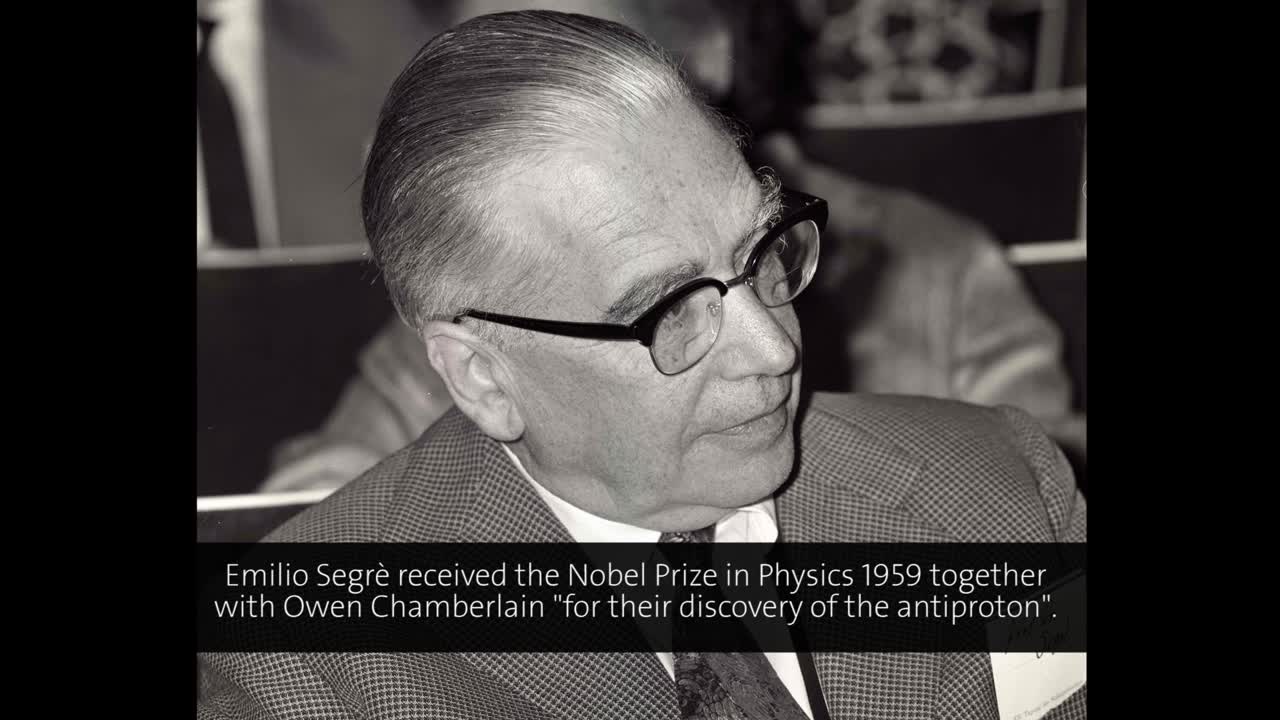

After his return from the AAAS meeting and other lectures in the US, Wolfgang Pauli attended a conference on nuclear physics in Rome. Enrico Fermi and his group had organized it to deepen their knowledge of this promising new area of research. They did so very successfully: as early as 1938, Fermi received the Nobel Prize in Physics “for his demonstrations of the existence of new radioactive elements produced by neutron irradiation, and for his related discovery of nuclear reactions brought about by slow neutrons”. As Pauli later recalled, Fermi “immediately expressed a lively interest in my idea and a very positive attitude toward my new neutral particles”.[5] After Chadwick’s discovery of the neutron in early 1932[6], it was Fermi who proposed naming the much lighter particle postulated by Pauli “neutrino”, i.e. the little neutron.

The neutrino issue was discussed at the 7th Solvay Conference on Physics, which was dedicated to the structure and properties of the atomic nucleus and took place in Brussels in October 1933. There Fermi hypothesized that the neutrino does not exist inside the nucleus beforehand but is created in the act of beta decay. This assumption laid the foundation for the theory of the weak interaction. When Nature rejected Fermi’s paper on this subject as too “speculative”, he first published it in an Italian journal. Fermi’s first doctoral student, Emilio Segrè, shared the Nobel Prize in Physics 1959 with Owen Chamberlain “for their discovery of the antiproton”. Segrè regarded this paper as Fermi’s “theoretical masterpiece”, as he mentioned in Lindau in his historical account of those years:

(00:28:18 - 00:32:23)

According to Fermi’s theory of beta decay, a neutron transforms into a proton by emitting an electron and an antineutrino. Likewise, a proton bound in a nucleus can transform into a neutron by emitting a positron and a neutrino. The former process occurs in the decay of fission products in nuclear reactors, the latter during nuclear fusion in the sun. Tens of billions of solar neutrinos per square centimeter reach and cross the earth every second. Second only to photons, neutrinos are the most abundant particles we know. Some of them were created in the Big Bang. Others stem from supernova explosions or from the interaction of cosmic rays with the earth’s atmosphere, and they can also be generated in high-energy accelerators. Neutrinos are everywhere: for every atom in the universe, there are approximately a billion neutrinos.

Why, then, are they so difficult to detect that they were once dubbed “ghost particles”? Because they are the only known elementary particles that interact solely through the weak force (apart from gravity) and are untouched by the electromagnetic and strong forces. As a result, they hardly ever interact with matter. An antineutrino, for example, only very rarely collides with a proton to form a neutron and a positron. Neutrinos travel through the sun and the earth unhindered and fly through our bodies without doing harm or leaving traces. Nevertheless, they are of utmost importance for our existence. Without the weak force and without neutrinos, the sun would not shine but explode, and no life could have developed, as Rudolf Mößbauer points out:
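The processes just described can be written in standard particle-physics notation, shown below as a textbook summary for illustration. The second line applies only to protons bound in nuclei, since a free proton is stable. The third line is the rare collision with a proton mentioned above. Strictly speaking, it involves an antineutrino and is known as inverse beta decay.

```latex
\begin{aligned}
n &\to p + e^- + \bar{\nu}_e && (\beta^-\ \text{decay})\\
p &\to n + e^+ + \nu_e && (\beta^+\ \text{decay, inside a nucleus})\\
\bar{\nu}_e + p &\to n + e^+ && (\text{inverse beta decay})
\end{aligned}
```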

(00:12:02 - 00:13:33)

“We Have Definitely Detected Neutrinos”

Because they carry no electric charge, neutrinos cannot be detected directly. In 1956, however, Frederick Reines and Clyde Cowan succeeded in proving the neutrino’s existence, probably encouraged by earlier considerations of Bruno Pontecorvo. They set up their experiment close to the Savannah River nuclear reactor. The reactor produced enough antineutrinos to give them a chance of catching an occasional signal in the scintillator fluid of their detection tank.

Their reasoning was as follows. When an antineutrino collides with a proton, a neutron and a positron are produced in an inverse beta decay. The positron soon meets an electron in the fluid, and the two annihilate and produce a gamma-ray signal. The neutron wanders through the fluid until it is absorbed by a nucleus, typically about five microseconds later, and that nucleus then emits a second gamma-ray signal. If they recorded two gamma-ray signals separated by such a delay of a few microseconds, they would have indirect evidence of a neutrino. Carlo Rubbia explained this experiment in one of his Lindau lectures. He shared the Nobel Prize in Physics 1984 with Simon van der Meer “for their decisive contributions to the large project, which led to the discovery of the field particles W and Z, communicators of weak interaction”:
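The delayed-coincidence idea behind the experiment can be sketched in a few lines of Python. This is a toy illustration, not a reconstruction of the original apparatus. The function name `find_delayed_pairs`, the list of flash times and the acceptance window of 1 to 10 microseconds are all hypothetical, chosen to bracket the delay of a few microseconds described above.

```python
from typing import List, Tuple


def find_delayed_pairs(
    flash_times_us: List[float],
    min_gap_us: float = 1.0,   # hypothetical lower edge of the acceptance window
    max_gap_us: float = 10.0,  # hypothetical upper edge of the acceptance window
) -> List[Tuple[float, float]]:
    """Return (prompt, delayed) flash pairs whose separation lies in the window.

    The prompt flash stands for positron annihilation, the delayed flash
    for neutron capture a few microseconds later.
    """
    times = sorted(flash_times_us)
    pairs: List[Tuple[float, float]] = []
    for i, first in enumerate(times):
        for second in times[i + 1:]:
            gap = second - first
            if gap > max_gap_us:
                break  # times are sorted, so all later flashes are even further away
            if gap >= min_gap_us:
                pairs.append((first, second))
    return pairs


if __name__ == "__main__":
    # Hypothetical flash times in microseconds.
    flashes = [0.0, 5.2, 100.0, 250.0, 254.8, 400.0, 400.3]
    print(find_delayed_pairs(flashes))
```

With the sample times, the pairs at 0.0/5.2 and 250.0/254.8 are accepted. The two flashes only 0.3 microseconds apart are rejected as too close, and isolated flashes are ignored as background. Requiring two correlated signals instead of one is what suppresses random background.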

(00:01:29 - 00:03:20)

“We are happy to inform you that we have definitely detected neutrinos from fission fragments by observing inverse beta decay of protons”, Cowan and Reines wrote in a telegram to Wolfgang Pauli on 14 June 1956. “The message reached Pauli at a conference in Geneva. He interrupted the proceedings and read the telegram out loud.”[7] On the agenda of the Royal Swedish Academy of Sciences, though, neutrinos had no priority at the time. Reines received a share of the Nobel Prize in Physics almost forty years later, in 1995, by which time his colleague Cowan had long since died. The first Nobel Prize in Physics explicitly related to neutrino research was awarded in 1988 in equal shares to Leon Lederman, Melvin Schwartz and Jack Steinberger. Surprisingly, it honored the discovery not of the first but of the second type of neutrino, the muon neutrino.

The muon is an unstable particle with a lifetime of roughly two microseconds. It is more than 200 times heavier than the electron and had been discovered in the mid-1930s. The discovery of its neutrino in the early 1960s was important because it established the existence of a second family of elementary particles. In the mid-1970s, Martin Perl discovered the tau, the charged lepton of the third family of particles. For this achievement he shared the Nobel Prize in Physics 1995 with Frederick Reines. Finally, in 2000, the DONUT collaboration at Fermilab discovered the tau neutrino. In contrast to electron neutrinos, muon and tau neutrinos are not produced in the sun or in nuclear reactors. They arise in laboratory accelerators or in exploding stars, and muon neutrinos are also created when cosmic rays strike the atmosphere. Together with the six quarks, which likewise come in three pairs, the three lepton pairs make up the three families of elementary particles. These families are an integral part of the standard model of particle physics. Its basic construction was elegantly outlined by Steven Weinberg (Nobel Prize in Physics 1979), one of its founding fathers, in the first of his two Lindau lectures:

(00:01:11 - 00:10:37)

An Amazing Contradiction Between Theory and Experiment

In the standard model, which was completed in the mid-1970s, neutrinos are massless particles. Yet this assumption had already been questioned in 1957, when Bruno Pontecorvo suggested that neutrinos might have some mass. This “neutrino mass problem” remained unsolved until the beginning of the 21st century, as did the “solar neutrino problem”.

The latter was revealed by John Bahcall and Raymond Davis in 1968. Drawing on a method suggested by Pontecorvo, they set up an experiment that used a big chlorine tank to detect solar neutrinos. Whenever a chlorine atom absorbed a neutrino, it was transformed into an atom of radioactive argon, which could be extracted from the tank and counted via its decay. Their original intention was “to test whether converting hydrogen nuclei to helium nuclei in the Sun is indeed the source of sunlight”.[8] In each of these fusion reactions, four protons are transformed into a helium nucleus of two protons and two neutrons, emitting two positrons and two neutrinos. Using a detailed computer model of the sun, Bahcall calculated the number of neutrinos that should arrive on earth. He also calculated the number of argon atoms they should produce in the 380,000-liter tank filled with the common cleaning fluid tetrachloroethylene. The tank was located about a mile underground in an old gold mine. There the rock shielded it from cosmic rays that could otherwise produce false signals, so that the scientists could distinguish true neutrino signals from spurious ones.

To their great surprise, however, the experiment failed to confirm the prediction. They detected only about one third as many radioactive argon atoms as predicted. Where had all the missing neutrinos gone? In 1969, it was again Pontecorvo who first proposed an answer pointing in the right direction: the neutrinos might change their type on the way from the sun to the earth.[9] But most theoreticians were not prepared to accept this answer, and experimental techniques were not yet advanced enough to provide the required data. Davis’s experiment ran until 1994. In 2002, Raymond Davis (at the age of 88!) and Masatoshi Koshiba shared one half of the Nobel Prize in Physics “for pioneering contributions to astrophysics, in particular for the detection of cosmic neutrinos”. Both expressed their regret that Bahcall had been left out. Bruno Pontecorvo had already died nine years earlier.
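The two reactions at the heart of the chlorine experiment can be summarized in textbook form for illustration. The first line is the net result of the solar fusion chain, with intermediate steps and the released energy omitted. The second is the neutrino capture on chlorine-37 that produces radioactive argon-37.

```latex
\begin{aligned}
4\,p &\to {}^{4}\mathrm{He} + 2\,e^+ + 2\,\nu_e\\
\nu_e + {}^{37}\mathrm{Cl} &\to {}^{37}\mathrm{Ar} + e^-
\end{aligned}
```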

Masatoshi Koshiba and his team had built the Kamiokande detector in a mine in Japan. It consisted of an enormous tank filled with water. “When neutrinos pass through this tank, they may interact with atomic nuclei in the water. This reaction leads to the release of an electron, creating small flashes of light. The tank was surrounded by photomultipliers that can capture these flashes. By adjusting the sensitivity of the detectors the presence of neutrinos could be proved and Davis's result was confirmed.”[10]

In February 1987, astronomers observed a supernova explosion that had taken place some 160,000 years earlier in the Large Magellanic Cloud, a neighboring galaxy of the Milky Way. As one account puts it, “it was the nearest and the brightest supernova seen in 383 years – since Johannes Kepler observed a supernova in our own galaxy with his naked eye in 1604”.[11] Such an explosion releases most of its enormous energy in the form of neutrinos. Of the estimated 10,000 trillion neutrinos that passed through their water tank, Koshiba’s group was able to detect twelve. This was the birth of neutrino astrophysics, as Koshiba titled his talk in Lindau in 2004. In the same talk he also introduced a larger detector, Super-Kamiokande, with much greater sensitivity to cosmic neutrinos.

(00:00:01 - 00:04:44)

A Convincing Solution Around the Turn of the Century

Super-Kamiokande (Super-K) is designed to capture neutrinos that are created in reactions between cosmic rays and the earth’s atmosphere. It can detect neutrinos coming straight down from the sky above and neutrinos coming up from below after travelling through the entire globe. The two fluxes should be equal, because neutrinos pass through the earth unhindered. For electron neutrinos this was indeed the case, but not for muon neutrinos, as Super-K researchers first reported in 1998. They detected fewer muon neutrinos coming up from below than coming down from the atmosphere directly overhead. The upward-going neutrinos had a much longer journey behind them, up to roughly 12,700 kilometers, the diameter of the earth. Apparently, this had given some of them time to change their identity and turn into tau neutrinos, which Super-K could not observe.

Such changes could, however, be measured at the Sudbury Neutrino Observatory (SNO) in Canada. Unlike Super-K, SNO is designed to measure solar neutrinos, and its tank is filled with heavy water instead of ordinary water. The deuterium nuclei in the heavy water allowed scientists to measure either the number of electron neutrinos alone or the total of all three neutrino types. The two numbers should have been equal, because the sun emits only electron neutrinos. Yet they were not: only one third of the expected electron neutrinos arrived, whereas the total matched the expected number of incoming neutrinos. This provided evidence that solar electron neutrinos oscillate and partly change their identity in flight. When the SNO scientists published their results in 2001 and 2002, John Bahcall’s theoretical calculations were vindicated.[12] The solar neutrino problem was solved: it is caused by neutrino oscillations. Such oscillations are only possible if neutrinos have mass. Their exact masses are still unknown, but the Super-K and SNO experiments had killed two birds with one stone. For this achievement, Takaaki Kajita and Arthur McDonald were awarded the Nobel Prize in Physics 2015.
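The up-down asymmetry can be illustrated with the standard two-flavor oscillation formula. The survival probability of a muon neutrino is one minus sin²(2θ) times sin²(1.267 Δm² L / E), with Δm² in eV², the path length L in kilometers and the energy E in GeV. The Python sketch below is a simplification under stated assumptions:

- It ignores the third neutrino type and matter effects inside the earth.
- The default parameters (Δm² = 2.5 × 10⁻³ eV², maximal mixing) are illustrative values roughly in the range reported for atmospheric neutrinos, not numbers taken from the Super-K analysis.
- The 15 km production height and the 1 GeV energy are hypothetical choices.
- The function name `muon_neutrino_survival` is illustrative.

```python
import math

# Illustrative parameters (assumptions, not fitted experimental values).
DELTA_M2_EV2 = 2.5e-3        # mass-squared difference in eV^2
SIN2_2THETA = 1.0            # maximal mixing
EARTH_DIAMETER_KM = 12742.0  # path length for neutrinos crossing the whole earth


def muon_neutrino_survival(length_km: float, energy_gev: float,
                           delta_m2_ev2: float = DELTA_M2_EV2,
                           sin2_2theta: float = SIN2_2THETA) -> float:
    """Two-flavor vacuum approximation of P(nu_mu -> nu_mu).

    P = 1 - sin^2(2 theta) * sin^2(1.267 * dm^2[eV^2] * L[km] / E[GeV])
    """
    phase = 1.267 * delta_m2_ev2 * length_km / energy_gev
    return 1.0 - sin2_2theta * math.sin(phase) ** 2


if __name__ == "__main__":
    energy = 1.0  # GeV, hypothetical neutrino energy
    down = muon_neutrino_survival(15.0, energy)             # assumed ~15 km production height
    up = muon_neutrino_survival(EARTH_DIAMETER_KM, energy)  # crossed the whole earth
    print(f"down-going survival: {down:.3f}")
    print(f"up-going survival:   {up:.3f}")
```

With these assumptions, the script prints about 0.998 for down-going and 0.786 for up-going neutrinos. The up-going value is very sensitive to the exact energy because the phase is large. Averaged over a realistic spread of energies and path lengths, the sin² term tends toward one half, so for maximal mixing roughly half of the up-going muon neutrinos would be expected to disappear.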

Clues to the Big Unanswered Questions

Since the breakthrough of Kajita, McDonald and their teams, neutrinos have taken center stage in physics. For many decades, neutrino research was regarded as cutting-edge but somewhat esoteric. This has changed dramatically, because neutrinos appear to offer clues to some of the major unanswered questions in both particle physics and cosmology. Several lectures at the Lindau Nobel Laureate Meeting 2015 showed how much attention neutrinos attract and how many prospects for future research they open up. The meeting took place three months before the Nobel Prize for Kajita and McDonald was announced.

Brian Schmidt, for example, shared the Nobel Prize in Physics 2011 with Saul Perlmutter and Adam Riess “for the discovery of the accelerating expansion of the Universe through observations of distant supernovae”. He discussed neutrinos (is there a fourth type?) in connection with the mystery of dark matter:

(00:19:32 - 00:24:34)

David Gross, co-recipient of the Nobel Prize in Physics 2004 “for the discovery of asymptotic freedom in the theory of the strong interaction”, showed how the discovery that neutrinos have mass casts doubt on the standard model:

(00:23:06 - 00:25:05)

François Englert, finally, shared the Nobel Prize in Physics 2013 with Peter Higgs “for the theoretical discovery of a mechanism that contributes to our understanding of the origin of mass of subatomic particles, and which recently was confirmed through the discovery of the predicted fundamental particle, by the ATLAS and CMS experiments at CERN's Large Hadron Collider”. He offered his perspective on the unknown beyond the discovery of the Higgs boson and placed neutrinos in the context of quantum gravity and the birth of the universe:

(00:27:55 - 00:31:50)

Revisiting Majorana’s Legacy

Given the importance of the questions raised in these lectures, it is quite understandable that neutrino experiments are becoming more and more complex. In December 2010, the IceCube Neutrino Observatory in Antarctica was completed. It is designed to detect neutrinos from cataclysmic cosmic events, with energies a million times greater than those of neutrinos from nuclear reactions, and thus to explore the highest-energy astrophysical processes. Its 5,160 digital optical sensors are distributed over one cubic kilometer of Antarctic ice. Its experimental goals include:

- the detection of point sources of high-energy neutrinos,
- the identification of galactic supernovae, and
- the search for sterile neutrinos.

Sterile neutrinos are a hypothetical fourth type of neutrino that would not interact via any of the fundamental interactions of the Standard Model except gravity and could help to explain dark matter. About 300 scientists at 47 institutions in twelve countries are involved in IceCube research. Between 2010 and 2012, the IceCube detector revealed a high-energy neutrino flux containing the most energetic neutrinos ever observed. “Although the origin of this flux is unknown, the findings are consistent with expectations for a neutrino population with origins outside the solar system.”[13]

One of the most exciting questions of current neutrino research is whether neutrinos are their own antiparticles. Ettore Majorana had suggested this possibility as early as the 1930s. Experimental proof of this daring hypothesis would be the discovery of a process called “neutrinoless double beta decay”. In this process, two neutrons in a nucleus decay together, so that “the antineutrino emitted by one is immediately absorbed by the other. The net result is two neutrons disintegrating simultaneously without releasing any antineutrinos and neutrinos”.[14] In that case, lepton number, a quantity conserved in the standard model, would be violated, and the standard model would have to be fundamentally revised. What is more, a neutrino that is its own antiparticle could help explain the asymmetry between matter and antimatter that arose shortly after the Big Bang. It would thus explain why our universe contains matter at all.
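The lepton-number argument can be made explicit with simple bookkeeping. The usual convention, added here for illustration, counts electrons and neutrinos as +1 and their antiparticles as −1, while neutrons and protons carry lepton number 0.

```latex
\begin{aligned}
&\text{ordinary double beta decay:} && 2n \to 2p + 2e^- + 2\bar{\nu}_e, && \Delta L = 2\cdot(+1) + 2\cdot(-1) = 0\\
&\text{neutrinoless double beta decay:} && 2n \to 2p + 2e^-, && \Delta L = 2\cdot(+1) = +2
\end{aligned}
```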

The discovery of the Higgs particle has been hailed as a confirmation of the standard model. The discovery that neutrinos have mass, however, shows that the standard model is not yet complete enough to describe the world in physical equations. “The experiments have revealed the first apparent crack in the Standard Model”, the Royal Swedish Academy of Sciences stated with regard to the Nobel Prize in Physics 2015.[15] This is a good sign for future insights and a strong motivation for young researchers. As the legendary singer-songwriter Leonard Cohen once put it: “There is a crack in everything. That’s how the light gets in.”

Footnotes:

[1] Quoted in Jayawardhana R. The Neutrino Hunters. Oneworld Publications, London, 2015, p. 42

[2] For the full text of the original German letter, in which Pauli’s humor is captured best, see Mößbauer R. (Ed.) History of Neutrino Physics: Pauli’s Letters. Proc. Neutrino Astrophysics, 20-24 Oct. 1997, pp. 3-5.

Both the full German text and its English translation are available at http://microboone-docdb.fnal.gov/cgi-bin/RetrieveFile?docid=953;filename=pauli%20letter1930.pdf

[3] http://www.nobelprize.org/nobel_prizes/physics/laureates/1945/press.html

[4] Pauli reportedly said this to his colleague Walter Baade; cf. Jayawardhana R., l.c., pp. 42 and 132

[5] Cf. Jayawardhana R., l.c., p. 44

[6] Chadwick J. Possible Existence of a Neutron. Nature 129, 312 (27 February 1932)

[7] Cf. Jayawardhana R., l.c., p. 72

[8] Cf. http://www.nobelprize.org/nobel_prizes/themes/physics/bahcall/, p. 2 (John Bahcall. Solving the Mystery of the Missing Neutrinos)

[9] Gribov VN and Pontecorvo BM. Neutrino astronomy and lepton charge. Phys. Lett. B 28, 493-496 (1969)

[10] cf. http://www.nobelprize.org/nobel_prizes/physics/laureates/2002/popular.html

[11] Cf. Jayawardhana R., l.c., p. 120

[12] “For three decades people had been pointing at this guy”, Bahcall said in an interview, “and saying that this guy is the guy who wrongly calculated the flux of neutrinos from the Sun, and suddenly that wasn’t so. It was like a person who had been sentenced for some heinous crime, and then a DNA test is made and it’s found that he isn’t guilty. That’s exactly the way I felt.” Quoted in Jayawardhana R., l.c., p. 107

[13] Aartsen MG et al. (IceCube Collaboration). Evidence for High-Energy Extraterrestrial Neutrinos at the IceCube Detector. Science 342 (6161), 22 November 2013

[14] Jayawardhana R., l.c., p. 160

[15] http://www.nobelprize.org/nobel_prizes/physics/laureates/2015/popular-physicsprize2015.pdf

Additional Mediatheque Lectures Associated with Neutrinos:

Rudolf Mößbauer 1979: "Neutrino Stability"

Rudolf Mößbauer 1985: "Rest Masses of Neutrinos"

Hans Dehmelt 1991: "That I May Know the inmost force…"

Leon Lederman 1991: "Science Education in a Changing World"

Melvin Schwartz 1991: "Studying the coulomb state of a pion and a Muon"

James Cronin 1991: "Astrophysics with Extensive Air Showers"

Rudolf Mößbauer 1994: "Characteristics of Neutrinos"

Martin Perl 1997: "The Search for Fractional Electrical Charge"

Rudolf Mößbauer 1997: "Neutrino Physics"

Rudolf Mößbauer 2001: "Masses of the neutrinos"

Martinus Veltman 2008: "The Development of Particle Physics"